This post is part of a series of posts in which I describe a clock-in/out system. If you want to read more, you can read the following posts:

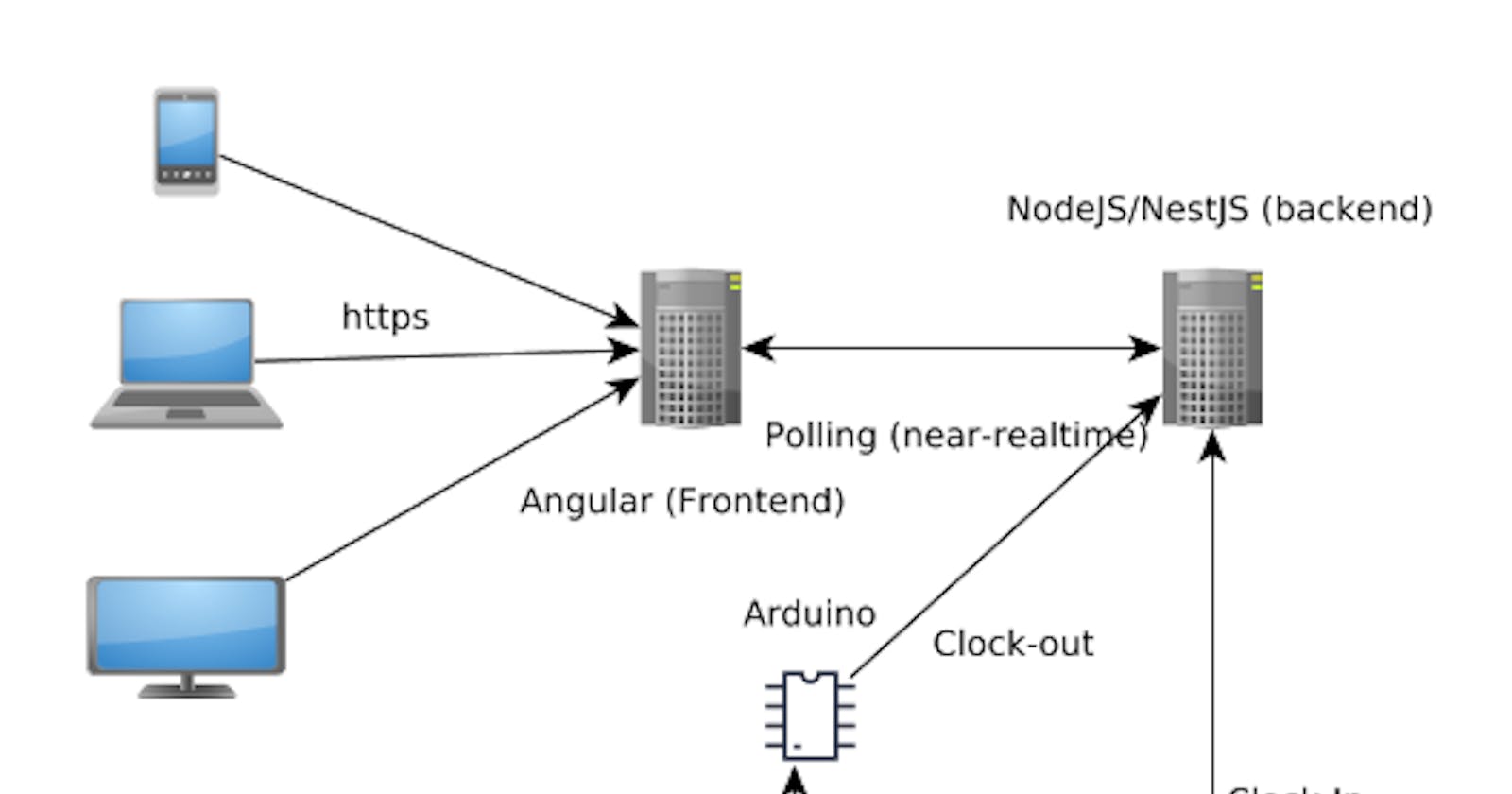

- Part 1. Clock-in/out System: Diagram.

- Part 2. Clock-in/out System: Basic backend - AuthModule.

- Part 3. Clock-in/out System: Basic backend - UsersModule.

- Part 4. Clock-in/out System: Basic backend - AppModule.

- Part 5. Clock-in/out System: Seed Database and migration data

- Part 6. Clock-in/out System: Basic frontend.

- Part 7. Clock-in/out System: Deploy backend (nestJS) using docker/docker-compose

- Part 8. Clock-in/out System: Deploy frontend (Angular 2+) using environments

- Part 9. Testing: Backend Testing - Unit Testing

- Part 10. Testing: Backend Testing - Integration Testing

- Part 11. Testing: Backend Testing - E2E Testing

- Part 12. Testing: Frontend Testing - Unit Testing

- Part 13. Testing: Frontend Testing - Integration Testing

Introduction

When you develop a software application, you frequently code in a development environment. However, sooner or later, you will need to deploy your app in a production environment, while continuing to develop in your development environment.

There are several solutions for managing environment variables in Node.js, but the most popular library is dotenv (a simple tutorial can be read on Twilio).

In our case, we’ve developed our backend using the Node.js framework NestJS, which has a module for managing environment variables using dotenv (NestJS-Config). However, I’ve developed my own NestJS module to manage Node’s environment variables without using external libraries.

Finally, our code is deployed using Docker containers: we will create an image from our code and run it with docker-compose.

Environment variables

The first step is to develop our EnvModule, which loads the custom variables from a file. It is therefore very important to know which environment file to load; its name can be passed using NODE_ENV (or any other variable). The second step is to modify the DatabaseModule to load the information from the EnvModule. The NODE_ENV variable will be passed using docker-compose.

EnvModule

I’ve developed an EnvModule, which configures an environment variable whose value will be either default or the content of NODE_ENV. The next step is defining a provider, which uses a factory to return the env variable from the environment file. This provider is exported to be used in other modules.
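The provider described above could look roughly like the following sketch. It is not the module's actual source: the `ENV` token is the provider name used in this post, but `resolveEnvName`, `makeEnvProvider`, and the inline `environments` table are hypothetical stand-ins (the real module reads the values from an environment file). The returned object has the `provide`/`useFactory` shape that NestJS accepts as a custom provider.

```typescript
// Hypothetical sketch of the EnvModule provider; helper names are illustrative.

// Minimal shape of the settings an environment file would export (assumed).
interface Env {
  DB_HOST: string;
}

// Stand-in for the per-environment files; the real module loads them from disk.
const environments: Record<string, Env> = {
  default: { DB_HOST: 'localhost' },
  production: { DB_HOST: 'postgres' }, // assumed container name
};

// Use NODE_ENV when it is set, otherwise fall back to the 'default' environment.
function resolveEnvName(nodeEnv: string | undefined): string {
  return nodeEnv !== undefined && nodeEnv.trim() !== '' ? nodeEnv : 'default';
}

// Build an object with the provide/useFactory shape of a NestJS factory provider.
function makeEnvProvider(nodeEnv: string | undefined) {
  return {
    provide: 'ENV',
    useFactory: (): Env => {
      const name = resolveEnvName(nodeEnv);
      const env = environments[name];
      if (env === undefined) {
        throw new Error(`Unknown environment: ${name}`);
      }
      return env;
    },
  };
}

// In the module this would be created with process.env.NODE_ENV and then
// listed under both `providers` and `exports` of the @Module decorator.
const envProvider = makeEnvProvider(undefined);
```

Passing the NODE_ENV value in as a parameter keeps the factory easy to exercise without touching the real process environment.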

The interface that is used in the files is the one shown in the env/env.ts file. This configuration is about the database and its password. It is very important to make the PASSWORD different in development and in production: imagine everyone in the company knowing the database’s root password because of such a mistake.

Therefore, the default environment file holds the development settings, and the production file holds the production settings.

Note that the DB_HOST variable is the classic localhost in the default environment and, when the environment is set to production, its value is the name of the machine which contains the PostgreSQL database (this name is assigned by the container).
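To make the difference between the two files concrete, here is a minimal sketch of what the interface and the two environments might contain. Only DB_HOST is named in this post; the remaining field names, the container name, and every value are placeholders I have assumed for illustration, as is the `assertDistinctPasswords` helper.

```typescript
// Illustrative sketch of env/env.ts and its two environment files.
// Only DB_HOST comes from the post; other fields and all values are assumptions.
interface Env {
  DB_HOST: string;
  DB_PORT: number;
  DB_USER: string;
  DB_PASSWORD: string;
  DB_DATABASE: string;
}

// Development ("default") settings: PostgreSQL is reached through localhost.
const defaultEnv: Env = {
  DB_HOST: 'localhost',
  DB_PORT: 5432,
  DB_USER: 'dev_user',
  DB_PASSWORD: 'dev-only-password',
  DB_DATABASE: 'clock_dev',
};

// Production settings: the host is the name of the PostgreSQL container
// (placeholder name) and the password differs from the development one.
const productionEnv: Env = {
  DB_HOST: 'postgres-container',
  DB_PORT: 5432,
  DB_USER: 'prod_user',
  DB_PASSWORD: 'replace-with-a-real-secret',
  DB_DATABASE: 'clock',
};

// Small guard that fails fast if both environments share a password.
function assertDistinctPasswords(a: Env, b: Env): void {
  if (a.DB_PASSWORD === b.DB_PASSWORD) {
    throw new Error('Development and production must not share a password');
  }
}

assertDistinctPasswords(defaultEnv, productionEnv);
```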

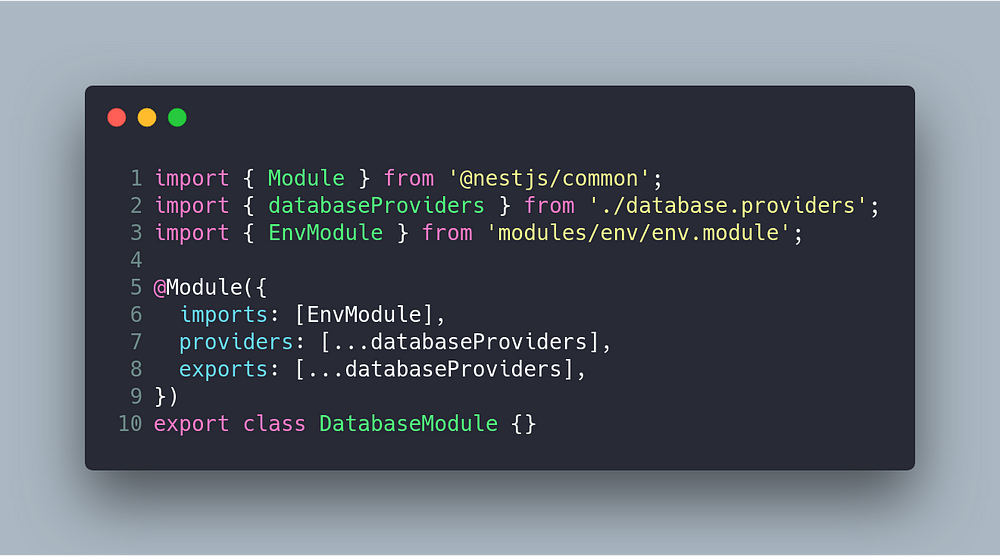

DatabaseModule

The EnvModule exports the ENV provider, which can be imported by DatabaseModule to configure the databaseProvider. Therefore, the first modification is to make DatabaseModule import EnvModule.

Since EnvModule exports the provider, it can be injected into the DbConnectionToken provider, which receives the ENV as an argument. The configuration is no longer hard-coded in the provider; it comes from the ENV provider, whose values are read from the environment file.
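The injection described above can be sketched as follows. This is an assumption-laden illustration rather than the project's code: the `Env` fields, the `toConnectionOptions` helper, and the TypeORM-style option names are mine, while `DbConnectionToken` and `ENV` are the tokens named in this post. The `inject` array is the NestJS mechanism that passes the value of each listed token to `useFactory`.

```typescript
// Hypothetical sketch of the database provider; not the project's actual source.

// Assumed shape of the value returned by the ENV provider.
interface Env {
  DB_HOST: string;
  DB_PORT: number;
  DB_USER: string;
  DB_PASSWORD: string;
  DB_DATABASE: string;
}

// Connection settings using TypeORM-style key names (an assumption).
interface ConnectionOptions {
  type: 'postgres';
  host: string;
  port: number;
  username: string;
  password: string;
  database: string;
}

// Map the environment values onto connection options instead of hard-coding them.
function toConnectionOptions(env: Env): ConnectionOptions {
  return {
    type: 'postgres',
    host: env.DB_HOST,
    port: env.DB_PORT,
    username: env.DB_USER,
    password: env.DB_PASSWORD,
    database: env.DB_DATABASE,
  };
}

// Factory provider shape used by NestJS: `inject` lists the tokens whose
// values are passed, in order, as arguments to `useFactory`.
const databaseProvider = {
  provide: 'DbConnectionToken',
  inject: ['ENV'],
  // A real provider would hand these options to the ORM's connect function.
  useFactory: (env: Env): ConnectionOptions => toConnectionOptions(env),
};
```

Because the factory only depends on its argument, the same provider works unchanged in development and production; only the injected ENV value differs.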

At this point, if you want to switch between environments, you can do so by setting the NODE_ENV variable when you start the application.

Deploy: Docker and Docker-compose

The idea is to use the same environment in development and production. In this context, Docker is the perfect tool because it allows us to configure different containers, which switch configuration using our EnvModule. We need to build our own image, from which a Docker container will be created, and afterwards this container will be orchestrated using docker-compose.

Docker

Our Dockerfile is based on the node:10-alpine image because the project does not need any system libraries. The Dockerfile merely copies the source code and installs the dependencies (using npm install).

When you build a Docker image, it is recommended to use a .dockerignore file, just as you would use .gitignore.

Docker-compose

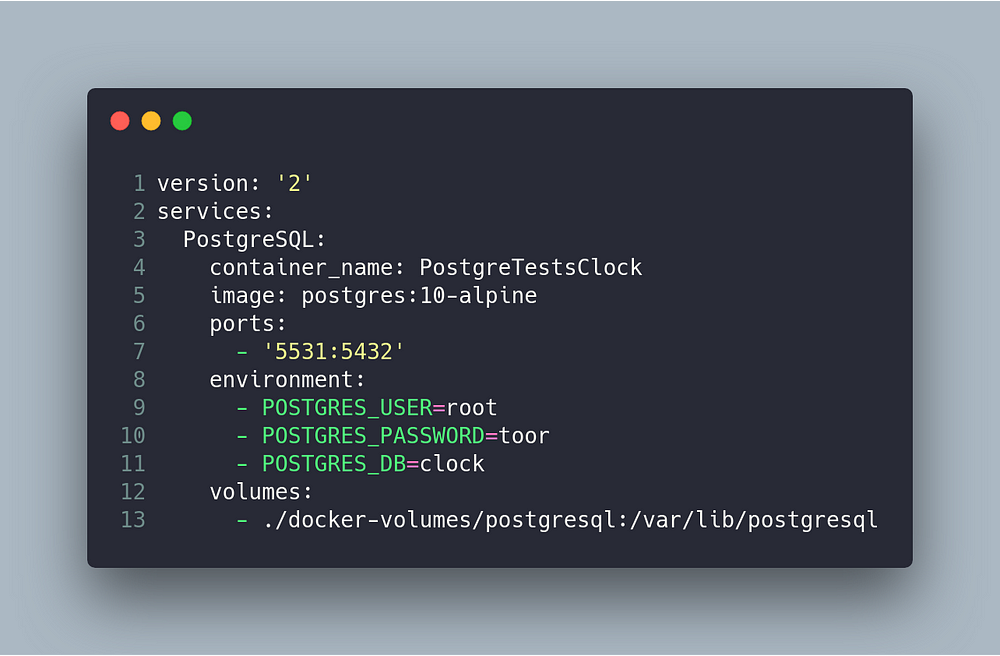

In our project, we have two different docker-compose files. The first is used for our development environment, where docker-compose only manages the PostgreSQL DBMS because the code runs directly on our own machine using the npm script npm run start:dev. Note that our service is based on postgres:10-alpine.

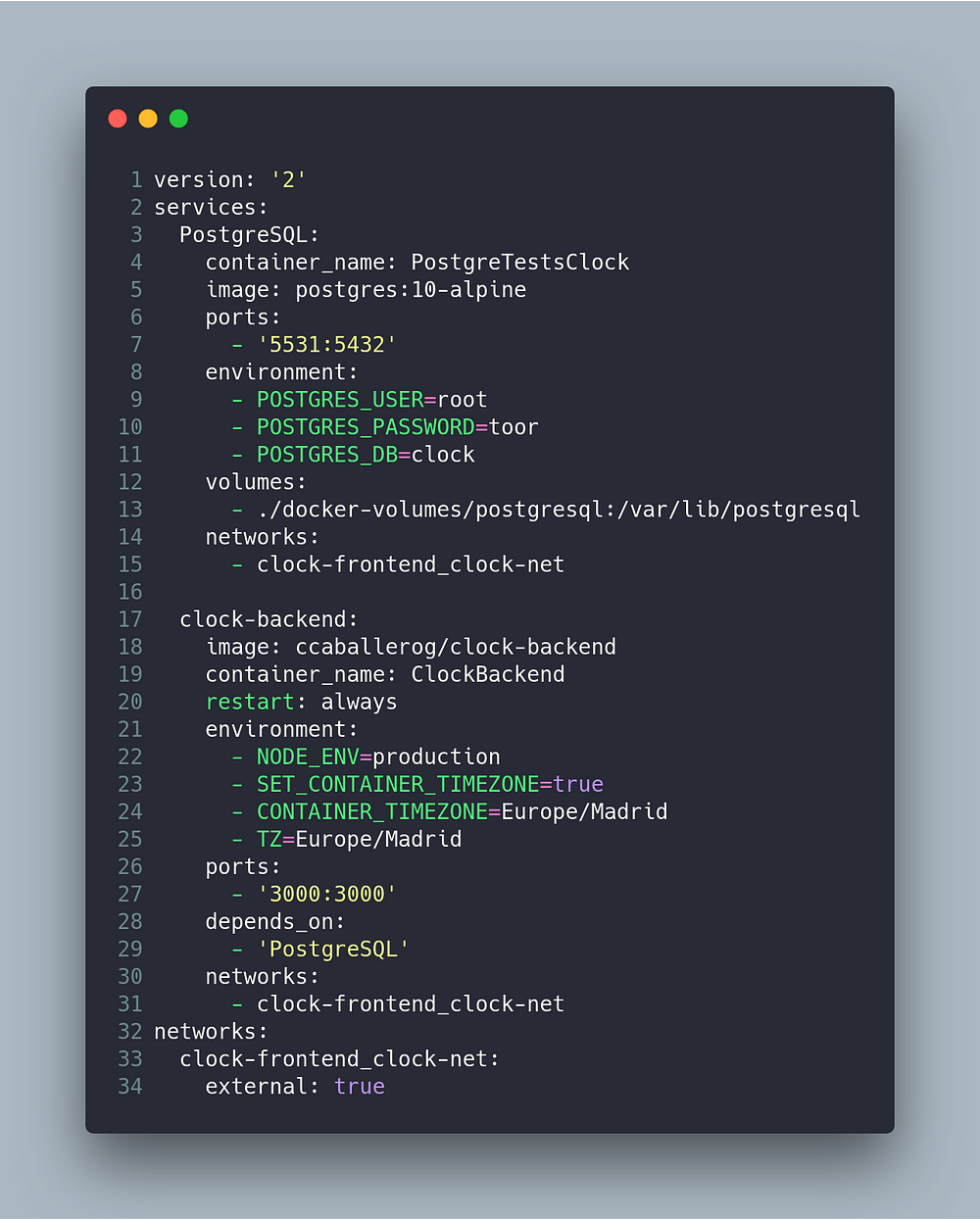

The second file is more complex, because in this case we have a container named clock-backend, based on the ccaballerog/clock-backend image, which was built in the last step. The clock-backend container needs to be aware of the PostgreSQL container. To do this, we would otherwise need a DNS server. However, docker-compose facilitates this task by enabling the use of the networks keyword. Note that both containers have defined the same network (clock-frontend_clock-net).

The clock-backend container has an environment area, in which we've defined both the timezone and the NODE_ENV as production (to load our environment file).

Shell script to deploy

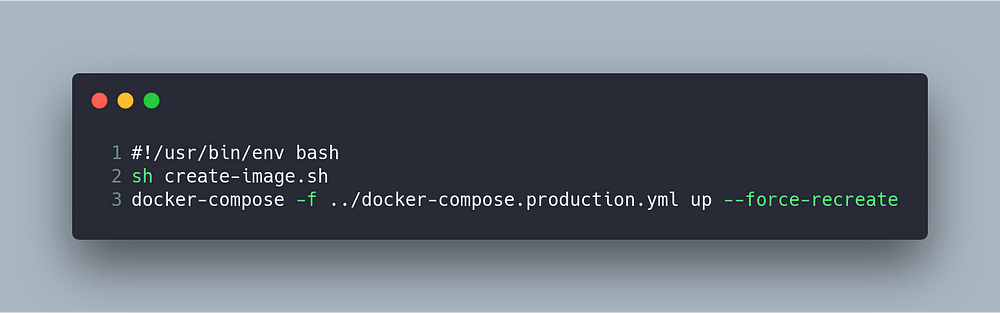

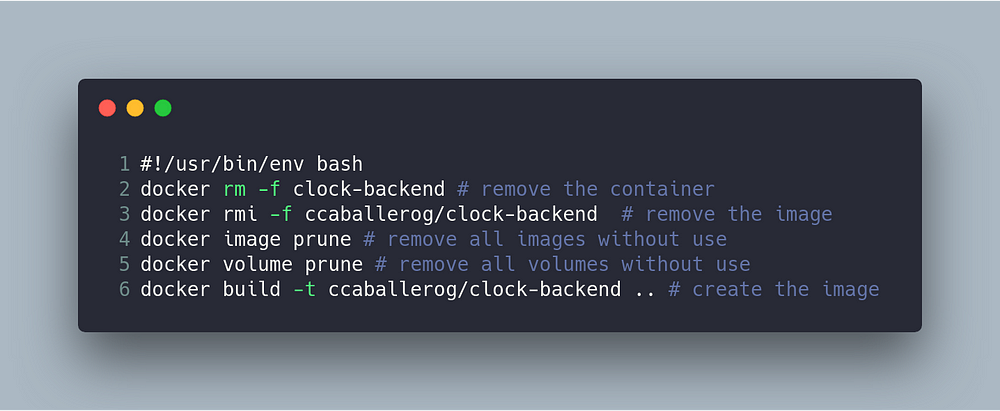

The last step of our process is to automate the construction and execution of the containers. I have two scripts for this task: the first script creates the image (removing any existing image first) and the second script deploys the code by using docker-compose.


Conclusion

In this post I’ve explained how you can deploy your NestJS backend by using Docker and docker-compose. The most interesting feature of this code is the fact that we can load our own environment variables, switching between development and production environments.

Originally published at www.carloscaballero.io on February 1, 2019